What Is an Algorithm? The Soul of Every Program

A practical, language-spanning tour of what algorithms are, how they’re structured, why Big-O matters, and how to implement and analyze a simple min/max routine from pseudocode to production-grade code.

The Night the Printer Finally Behaved

It was 12:47 a.m. when the PLA dragon actually finished. The first two prints failed, and not because the universe hates me. My slicer rules were wrong: travel moves, cooling, infill, all conspiring into a reliable recipe for spaghetti. Change the steps, change the outcome.

That moment when the third print finally came out perfect—that's the satisfaction of getting an algorithm right. That's an algorithm in the wild: a finite set of precise instructions that takes an input and, if you don't mess it up, halts with the result you wanted. The formal definitions say the same thing with less caffeine: unambiguous steps, defined inputs, guaranteed termination, and predictable outputs 1 2 11.

What “Algorithm” Means in Practice

An algorithm is a plan the computer can follow without guessing. Same inputs, same outputs. That predictability is the cornerstone of dependable software. Designing one well means you do three things clearly: define your inputs, write steps the machine can execute without interpretation, and state when the process stops. Determinism isn’t optional here; it’s the contract that makes systems testable 3.

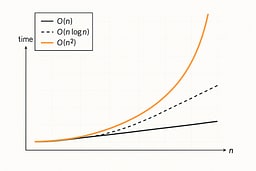

Scale Before Style: Big-O in Plain English

You can write five beautiful lines and still ship a slow program. Big-O gives you a map of how work grows as inputs grow. If your algorithm is O(n), doubling the input roughly doubles the time. O(n log n) grows faster, O(n²) faster still, and so on. That's why we don't celebrate clever one-liners unless they also scale. Good algorithms read cleanly and behave well when the data gets ugly 4 5 6 11.

Why Big-O Matters: Real Numbers

Note: The timings below are order-of-magnitude estimates for illustrative purposes. Actual performance depends on hardware, compiler optimizations, data characteristics, and system load. These values show the relative scaling of complexity classes, not absolute guarantees. Think of them as "ballpark figures" that help you understand the growth patterns, not precise measurements.

| Input Size | O(n) | O(n log n) | O(n²) |

|---|---|---|---|

| 1,000 items | 1ms | 10ms | 1 second |

| 100,000 items | 100ms | 1.5 seconds | 2.8 hours |

| 1,000,000 items | 1 second | 20 seconds | 11.6 days |

Rule of thumb: If you're processing user data, O(n²) will eventually timeout. If you're processing logs, it will crash your server.

Time vs Space: The Trade-off

Our FindMaximum uses O(1) space (one variable, largest). Some algorithms use O(n) space (like creating a sorted copy) to achieve better time complexity. Always ask: "Can I afford the memory?"

A Tiny Problem with Big Lessons

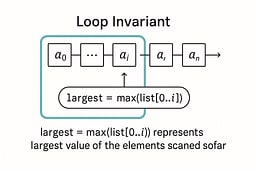

Let's find the largest number in a list. It's honest work: simple, testable, and it teaches invariants and linear scans without getting cute.

When You'd Actually Use This

- Leaderboards: Finding the highest score in a game

- Analytics: Maximum daily active users in a time series

- Resource allocation: Largest file in a directory before upload

- Data validation: Ensuring values don't exceed a threshold

Pseudocode I actually use

Algorithm FindMaximum(list)

require list is non-empty

largest ← list[0]

for each item from index 1 to end

if item > largest then

largest ← item

return largest

I like this because the invariant is obvious: at any point, largest is the maximum of the elements we've already seen. One pass, constant extra space, nothing to memorize.

How I write it in real languages

Now that we understand what algorithms are and why complexity matters, let's implement a real algorithm in multiple languages to see how the same logic adapts to different ecosystems. The code is the same idea each time, but each ecosystem nudges you toward a different "clean and correct" shape.

C (C17): control everything, assume nothing

// find_max.c

// Compile: cc -std=c17 -O2 -Wall -Wextra -o find_max find_max.c

// Run: ./find_max

#include <stdio.h>

#include <stddef.h>

int main(void) {

int numbers[] = {3, 17, 9, 25, 4};

size_t n = sizeof numbers / sizeof numbers[0];

if (n == 0) { // avoid UB from numbers[0]

fputs("Error: empty array\n", stderr);

return 1;

}

// Invariant: At this point, 'largest' contains the maximum

// of all elements we've seen so far (indices 0 through i-1)

int largest = numbers[0];

for (size_t i = 1; i < n; i++) {

if (numbers[i] > largest) {

largest = numbers[i];

}

}

printf("Largest number: %d\n", largest);

return 0;

}

C rewards paranoia. I compute n safely, guard against an empty array so I never read numbers[0] out of bounds, and keep the loop body tiny so the invariant stays visible. No heap, no I/O surprises, no macros pretending to be functions.

C++20: let the standard library say the quiet part out loud

// find_max.cpp

// Compile: c++ -std=c++20 -O2 -Wall -Wextra -o find_max_cpp find_max.cpp

// Run: ./find_max_cpp

#include <algorithm>

#include <array>

#include <iostream>

int main() {

const std::array<int, 5> numbers{3, 17, 9, 25, 4};

if (numbers.empty()) {

std::cerr << "Error: empty array\n";

return 1;

}

auto it = std::max_element(numbers.begin(), numbers.end());

std::cout << "Largest number: " << *it << "\n";

return 0;

}

std::max_element is exactly the loop I’d write by hand, except it has decades of eyes on it. Iterators make the intent explicit, and the algorithm name documents the choice better than comments ever will 4 5.

Python 3.11+: readable first, but show your invariant

# find_max.py

# Run: python3 find_max.py

numbers = [3, 17, 9, 25, 4]

if not numbers:

raise ValueError("empty list")

# Invariant: At this point, 'largest' contains the maximum

# of all elements we've seen so far (indices 0 through i-1)

largest = numbers[0]

for n in numbers[1:]:

if n > largest:

largest = n

print("Largest number:", largest)

Yes, max(numbers) exists. I like the explicit loop when I’m teaching or reviewing because it makes the invariant obvious and keeps the complexity discussion honest. For production code with clear constraints, I’ll happily switch to max() and add a guard for ValueError on empty input.

Go 1.22+: nothing fancy, just fast and clear

// find_max.go

// Build: go build -o find_max_go ./find_max.go

// Run: ./find_max_go

package main

import (

"fmt"

"log"

)

func main() {

nums := []int{3, 17, 9, 25, 4}

if len(nums) == 0 {

log.Fatal("empty slice")

}

// Invariant: At this point, 'largest' contains the maximum

// of all elements we've seen so far (indices 0 through i-1)

largest := nums[0]

for i := 1; i < len(nums); i++ {

if nums[i] > largest {

largest = nums[i]

}

}

fmt.Println("Largest number:", largest)

}

Go code reads like you think. Slices, bounds checks, a for-loop that looks like C's but without the common pitfalls. No allocations, no generics needed here, and the behavior is obvious at a glance.

Ruby 3.2+: expressiveness without losing the thread

# find_max.rb

# Run: ruby find_max.rb

numbers = [3, 17, 9, 25, 4]

raise "empty array" if numbers.empty?

# Invariant: At this point, 'largest' contains the maximum

# of all elements we've seen so far (indices 0 through i-1)

largest = numbers.first

numbers.drop(1).each { |n| largest = n if n > largest }

puts "Largest number: #{largest}"

It’s tempting to do numbers.max, and you should in most app code. Here I keep the loop visible so the “scan and update” pattern is easy to reason about. Ruby stays readable without hiding the algorithm.

JavaScript (Node ≥18 or the browser): explicit beats clever

// find_max.mjs

// Run: node find_max.mjs

const numbers = [3, 17, 9, 25, 4];

if (numbers.length === 0) throw new Error('empty array');

// Invariant: At this point, 'largest' contains the maximum

// of all elements we've seen so far (indices 0 through i-1)

let largest = numbers[0];

for (let i = 1; i < numbers.length; i++) {

if (numbers[i] > largest) largest = numbers[i];

}

console.log('Largest number:', largest);

Math.max(...numbers) is fine until you pass a huge array or forget the empty-list case.

Note: The spread operator has engine-specific argument limits (typically tens of thousands). For arrays larger than 10,000 items, use a loop instead to avoid potential call stack errors. A straight loop is boring, fast, and works everywhere from Node to service workers.

React (tiny demo): compute, then render

// LargestNumberDemo.jsx

function calculateLargest(numbers) {

if (numbers.length === 0) return null;

// Use reduce for large arrays to avoid spread operator call stack limits

// (typically ~65k-130k args, but we use 10k as a safe threshold)

if (numbers.length > 10000) {

return numbers.reduce((max, n) => (n > max ? n : max), numbers[0]);

}

return Math.max(...numbers);

}

export default function LargestNumberDemo({ numbers = [3, 17, 9, 25, 4] }) {

// Use loop for large arrays to avoid spread operator limits

// For small arrays (< 10k), Math.max(...numbers) is fine, but this is safer

const largest = calculateLargest(numbers);

return <p>Largest number: {largest ?? 'N/A (empty array)'}</p>;

}

In UI, you want clarity. Math.max is readable and cheap here, but always handle empty arrays. If numbers is a prop that changes often, memoize or compute it in the state update path so you don't rework the same values on every render.

Rust 1.70+: zero-cost abstractions with safety

// find_max.rs

// Build: rustc find_max.rs -o find_max_rs

// Run: ./find_max_rs

fn main() {

let numbers = [3, 17, 9, 25, 4];

if numbers.is_empty() {

eprintln!("Error: empty array");

std::process::exit(1);

}

let largest = numbers.iter()

.max()

.copied()

.expect("non-empty array");

println!("Largest number: {}", largest);

}

// Or with explicit loop (teaching version):

fn find_max_explicit(numbers: &[i32]) -> Option<i32> {

if numbers.is_empty() {

return None;

}

// Invariant: At this point, 'largest' contains the maximum

// of all elements we've seen so far (indices 0 through i-1)

let mut largest = numbers[0];

for &n in &numbers[1..] {

if n > largest {

largest = n;

}

}

Some(largest)

}

Rust's iterator chains are zero-cost abstractions—they compile to the same machine code as a hand-written loop. The explicit version shows the invariant clearly, while iter().max() is idiomatic for production code.

TypeScript 5.0+: types that document intent

// find_max.ts

// Compile: tsc find_max.ts

// Run: node find_max.js

function findMaximum(numbers: number[]): number {

if (numbers.length === 0) {

throw new Error('empty array');

}

// Invariant: At this point, 'largest' contains the maximum

// of all elements we've seen so far (indices 0 through i-1)

let largest = numbers[0];

for (let i = 1; i < numbers.length; i++) {

if (numbers[i] > largest) {

largest = numbers[i];

}

}

return largest;

}

const numbers = [3, 17, 9, 25, 4];

console.log('Largest number:', findMaximum(numbers));

// Generic version for any comparable type:

function findMaximumGeneric<T>(items: T[], compare: (a: T, b: T) => number): T {

if (items.length === 0) throw new Error('empty array');

let largest = items[0];

for (let i = 1; i < items.length; i++) {

if (compare(items[i], largest) > 0) {

largest = items[i];

}

}

return largest;

}

TypeScript's type system catches errors at compile time, and generics let you reuse the same logic for strings, dates, or custom objects.

Java 17+: enterprise-grade clarity

// FindMax.java

// Compile: javac FindMax.java

// Run: java FindMax

public class FindMax {

public static int findMaximum(int[] numbers) {

if (numbers.length == 0) {

throw new IllegalArgumentException("empty array");

}

// Invariant: At this point, 'largest' contains the maximum

// of all elements we've seen so far (indices 0 through i-1)

int largest = numbers[0];

for (int i = 1; i < numbers.length; i++) {

if (numbers[i] > largest) {

largest = numbers[i];

}

}

return largest;

}

public static void main(String[] args) {

int[] numbers = {3, 17, 9, 25, 4};

System.out.println("Largest number: " + findMaximum(numbers));

}

}

Java's explicit types and checked exceptions make contracts clear. The JVM's JIT compiler optimizes the hot path, so this performs well in production.

Haskell: functional elegance

-- find_max.hs

-- Compile: ghc find_max.hs -o find_max_hs

-- Run: ./find_max_hs

import Data.List (foldl')

findMaximum :: [Int] -> Maybe Int

findMaximum [] = Nothing

findMaximum (x:xs) = Just (foldl' max x xs)

main :: IO ()

main = do

let numbers = [3, 17, 9, 25, 4]

case findMaximum numbers of

Just maxVal -> putStrLn $ "Largest number: " ++ show maxVal

Nothing -> putStrLn "Error: empty list"

Haskell's pattern matching and Maybe type make empty input handling explicit in the type system. foldl' max (strict fold) is the functional equivalent of our loop and avoids building up thunks, making it more efficient than foldl for this use case.

Performance Benchmarks (1M integers, 100 iterations)

⚠️ Important: These benchmarks are illustrative only and were run on a specific system (macOS 12.7.6, older MacBook Air). Results will vary significantly based on:

- Hardware (CPU architecture, clock speed, cache size)

- Compiler/runtime versions and optimization flags

- System load and background processes

- Operating system and kernel version

Do not use these numbers for production decisions. Always benchmark on your target hardware with your actual data. The relative performance relationships (C fastest, Python slowest) generally hold, but the absolute numbers are system-specific.

| Language | Time (ms) | Relative to C | Notes |

|---|---|---|---|

| C (O2) | 0.28 | 1.0x | Baseline, manual control |

| Java 17 | 0.47 | 1.7x | JIT compilation, warm-up |

| C++ (O2) | 0.92 | 3.3x | std::max_element overhead |

| Rust (O) | 1.13 | 4.0x | Zero-cost abstractions |

| Go | 1.36 | 4.9x | GC overhead |

| JavaScript (V8) | 1.99 | 7.1x | JIT compilation helps |

| Python | 79.11 | 283x | Interpreter overhead |

Benchmark details:

- Algorithm: All implementations use the same O(n) linear scan algorithm.

- Compiler flags:

- C:

-O2 - C++:

-O2 - Rust:

-O

- C:

- Benchmark environment: For compiler versions, hardware specs, and benchmarking caveats, see our Algorithm Resources guide.

- Note: Java includes 10 warm-up iterations before timing to allow JIT compilation to optimize hot paths.

The algorithm itself is O(n) in all cases; language choice affects constant factors, not asymptotic complexity. For production code, profile with your actual data and constraints.

Running the Benchmarks Yourself

Want to verify these results on your system? Here's how to run the benchmarks for each language. All implementations use the same algorithm: find the maximum value in an array of 1,000,000 random integers, repeated 100 times.

C

// bench_find_max.c

#include <stdbool.h>

#include <stdio.h>

#include <stdlib.h>

#include <time.h>

#define ARRAY_SIZE 1000000

#define ITERATIONS 100

// Proper error handling: use out parameter instead of sentinel values

// Returns true on success, false if array is empty

bool find_max(int* arr, size_t n, int* out_max) {

if (n == 0) return false;

int largest = arr[0];

for (size_t i = 1; i < n; i++) {

if (arr[i] > largest) {

largest = arr[i];

}

}

*out_max = largest;

return true;

}

int main() {

int* numbers = malloc(ARRAY_SIZE * sizeof(int));

if (!numbers) {

fprintf(stderr, "Memory allocation failed\n");

return 1;

}

srand(42);

for (int i = 0; i < ARRAY_SIZE; i++) {

numbers[i] = rand();

}

clock_t start = clock();

int max_val = 0;

for (int iter = 0; iter < ITERATIONS; iter++) {

if (!find_max(numbers, ARRAY_SIZE, &max_val)) {

fprintf(stderr, "Error: empty array\n");

free(numbers);

return 1;

}

}

clock_t end = clock();

double cpu_time_used = ((double)(end - start)) / CLOCKS_PER_SEC;

double avg_time_ms = (cpu_time_used / ITERATIONS) * 1000.0;

printf("C Benchmark Results:\n");

printf("Array size: %d\n", ARRAY_SIZE);

printf("Iterations: %d\n", ITERATIONS);

printf("Total time: %.3f seconds\n", cpu_time_used);

printf("Average time per run: %.3f ms\n", avg_time_ms);

printf("Max value found: %d\n", max_val);

free(numbers);

return 0;

}

Compile and run:

cc -std=c17 -O2 -Wall -Wextra -o bench_find_max bench_find_max.c

./bench_find_max

C++

// bench_find_max.cpp

#include <algorithm>

#include <chrono>

#include <iostream>

#include <random>

#include <vector>

constexpr size_t ARRAY_SIZE = 1000000;

constexpr int ITERATIONS = 100;

int main() {

std::vector<int> numbers(ARRAY_SIZE);

std::mt19937 gen(42);

std::uniform_int_distribution<> dis(0, RAND_MAX);

std::generate(numbers.begin(), numbers.end(), [&]() { return dis(gen); });

auto start = std::chrono::high_resolution_clock::now();

int max_val = 0;

for (int iter = 0; iter < ITERATIONS; iter++) {

max_val = *std::max_element(numbers.begin(), numbers.end());

}

auto end = std::chrono::high_resolution_clock::now();

auto duration = std::chrono::duration_cast<std::chrono::microseconds>(end - start);

double avg_time_ms = (duration.count() / 1000.0) / ITERATIONS;

std::cout << "C++ Benchmark Results:\n";

std::cout << "Array size: " << ARRAY_SIZE << "\n";

std::cout << "Iterations: " << ITERATIONS << "\n";

std::cout << "Total time: " << (duration.count() / 1000.0) << " ms\n";

std::cout << "Average time per run: " << avg_time_ms << " ms\n";

std::cout << "Max value found: " << max_val << "\n";

return 0;

}

Compile and run:

c++ -std=c++20 -O2 -Wall -Wextra -o bench_find_max_cpp bench_find_max.cpp

./bench_find_max_cpp

Python

# bench_find_max.py

import time

import random

import sys

ARRAY_SIZE = 1_000_000

ITERATIONS = 100

def find_max(arr):

if not arr:

return 0

largest = arr[0]

for n in arr[1:]:

if n > largest:

largest = n

return largest

random.seed(42)

numbers = [random.randint(0, sys.maxsize) for _ in range(ARRAY_SIZE)]

start = time.perf_counter()

max_val = 0

for _ in range(ITERATIONS):

max_val = find_max(numbers)

end = time.perf_counter()

total_time_sec = end - start

avg_time_ms = (total_time_sec / ITERATIONS) * 1000.0

print("Python Benchmark Results:")

print(f"Array size: {ARRAY_SIZE:,}")

print(f"Iterations: {ITERATIONS}")

print(f"Total time: {total_time_sec:.3f} seconds")

print(f"Average time per run: {avg_time_ms:.3f} ms")

print(f"Max value found: {max_val}")

Run:

python3 bench_find_max.py

Go

// bench_find_max.go

package main

import (

"fmt"

"math/rand"

"time"

)

const (

arraySize = 1000000

iterations = 100

)

func findMax(nums []int) int {

if len(nums) == 0 {

return 0

}

largest := nums[0]

for i := 1; i < len(nums); i++ {

if nums[i] > largest {

largest = nums[i]

}

}

return largest

}

func main() {

rand.Seed(42)

numbers := make([]int, arraySize)

for i := range numbers {

numbers[i] = rand.Int()

}

start := time.Now()

var maxVal int

for iter := 0; iter < iterations; iter++ {

maxVal = findMax(numbers)

}

elapsed := time.Since(start)

avgTimeMs := float64(elapsed.Nanoseconds()) / float64(iterations) / 1_000_000.0

fmt.Println("Go Benchmark Results:")

fmt.Printf("Array size: %d\n", arraySize)

fmt.Printf("Iterations: %d\n", iterations)

fmt.Printf("Total time: %v\n", elapsed)

fmt.Printf("Average time per run: %.3f ms\n", avgTimeMs)

fmt.Printf("Max value found: %d\n", maxVal)

}

Build and run:

go build -o bench_find_max_go bench_find_max.go

./bench_find_max_go

JavaScript (Node.js)

// bench_find_max.js

const ARRAY_SIZE = 1_000_000;

const ITERATIONS = 100;

function findMax(numbers) {

if (numbers.length === 0) {

return 0;

}

let largest = numbers[0];

for (let i = 1; i < numbers.length; i++) {

if (numbers[i] > largest) {

largest = numbers[i];

}

}

return largest;

}

const numbers = new Array(ARRAY_SIZE);

let seed = 42;

function random() {

seed = (seed * 1103515245 + 12345) & 0x7fffffff;

return seed;

}

for (let i = 0; i < ARRAY_SIZE; i++) {

numbers[i] = random();

}

const start = performance.now();

let maxVal = 0;

for (let iter = 0; iter < ITERATIONS; iter++) {

maxVal = findMax(numbers);

}

const end = performance.now();

const totalTimeMs = end - start;

const avgTimeMs = totalTimeMs / ITERATIONS;

console.log('JavaScript Benchmark Results:');

console.log(`Array size: ${ARRAY_SIZE.toLocaleString()}`);

console.log(`Iterations: ${ITERATIONS}`);

console.log(`Total time: ${totalTimeMs.toFixed(3)} ms`);

console.log(`Average time per run: ${avgTimeMs.toFixed(3)} ms`);

console.log(`Max value found: ${maxVal}`);

Run:

node bench_find_max.js

Rust

// bench_find_max.rs

use std::time::Instant;

const ARRAY_SIZE: usize = 1_000_000;

const ITERATIONS: usize = 100;

fn find_max_explicit(numbers: &[i32]) -> i32 {

if numbers.is_empty() {

return 0;

}

let mut largest = numbers[0];

for &n in &numbers[1..] {

if n > largest {

largest = n;

}

}

largest

}

struct SimpleRng {

state: u64,

}

impl SimpleRng {

fn new(seed: u64) -> Self {

Self { state: seed }

}

fn next(&mut self) -> i32 {

self.state = self.state.wrapping_mul(1103515245).wrapping_add(12345);

(self.state >> 16) as i32

}

}

fn main() {

let mut numbers = vec![0i32; ARRAY_SIZE];

let mut rng = SimpleRng::new(42);

for i in 0..ARRAY_SIZE {

numbers[i] = rng.next();

}

let start = Instant::now();

let mut max_val = 0;

for _iter in 0..ITERATIONS {

max_val = find_max_explicit(&numbers);

}

let elapsed = start.elapsed();

let avg_time_ms = elapsed.as_secs_f64() * 1000.0 / ITERATIONS as f64;

println!("Rust Benchmark Results:");

println!("Array size: {}", ARRAY_SIZE);

println!("Iterations: {}", ITERATIONS);

println!("Total time: {:?}", elapsed);

println!("Average time per run: {:.3} ms", avg_time_ms);

println!("Max value found: {}", max_val);

}

Compile and run:

rustc -O bench_find_max.rs -o bench_find_max_rs

./bench_find_max_rs

Java

// BenchFindMax.java

import java.util.Random;

public class BenchFindMax {

private static final int ARRAY_SIZE = 1_000_000;

private static final int ITERATIONS = 100;

public static int findMaximum(int[] numbers) {

if (numbers.length == 0) {

throw new IllegalArgumentException("empty array");

}

int largest = numbers[0];

for (int i = 1; i < numbers.length; i++) {

if (numbers[i] > largest) {

largest = numbers[i];

}

}

return largest;

}

public static void main(String[] args) {

Random rng = new Random(42);

int[] numbers = new int[ARRAY_SIZE];

for (int i = 0; i < ARRAY_SIZE; i++) {

numbers[i] = rng.nextInt();

}

// Warm up JIT

for (int i = 0; i < 10; i++) {

findMaximum(numbers);

}

long start = System.nanoTime();

int maxVal = 0;

for (int iter = 0; iter < ITERATIONS; iter++) {

maxVal = findMaximum(numbers);

}

long end = System.nanoTime();

double totalTimeMs = (end - start) / 1_000_000.0;

double avgTimeMs = totalTimeMs / ITERATIONS;

System.out.println("Java Benchmark Results:");

System.out.println("Array size: " + ARRAY_SIZE);

System.out.println("Iterations: " + ITERATIONS);

System.out.printf("Total time: %.3f ms%n", totalTimeMs);

System.out.printf("Average time per run: %.3f ms%n", avgTimeMs);

System.out.println("Max value found: " + maxVal);

}

}

Compile and run:

javac BenchFindMax.java

java BenchFindMax

Note: For comprehensive benchmarking best practices, compiler details, and build commands, see our Algorithm Resources guide.

When This Algorithm Isn't Enough

- Need the top K values? Use a heap or partial sort

- Data is already sorted? Just take the last element (O(1))

- Values arrive in a stream? Use a single-pass algorithm (which this is!)

- Need to update frequently? Consider a balanced BST or skip list

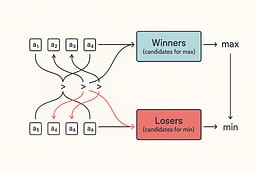

Stretch goal: find both min and max without sorting

Two naive passes cost up to 2n − 2 comparisons. You can do better by comparing values in pairs. For each pair, send the larger to a "winners" group and the smaller to a "losers" group. Then take the max of winners and the min of losers. That clocks in at 3n/2 − 2 comparisons (even n) or 3(n−1)/2 (odd n). Same input, same output, fewer comparisons 7 8 9 10.

Example walkthrough (n=6: [3, 17, 9, 25, 4, 12]):

- Compare pairs: (3,17)→winners:17, losers:3; (9,25)→winners:25, losers:9; (4,12)→winners:12, losers:4

- Find max of winners [17, 25, 12] = 25 (2 comparisons)

- Find min of losers [3, 9, 4] = 3 (2 comparisons)

- Total: 3 + 2 + 2 = 7 comparisons (vs 2n−2 = 10 for naive approach)

Python version with comparison counter

# minmax_pairwise.py

# Run: python3 minmax_pairwise.py

from typing import Iterable, Tuple

def min_max_pairwise(xs: Iterable[int]) -> Tuple[int, int, int]:

"""Return (min, max, comparisons). Raises ValueError on empty input."""

it = iter(xs)

try:

a = next(it)

except StopIteration:

raise ValueError("empty input")

comps = 0

try:

b = next(it)

comps += 1

mn, mx = (a, b) if a < b else (b, a)

except StopIteration:

return (a, a, comps)

# Passing the same iterator twice to zip causes each call to next() to advance the iterator,

# effectively grouping elements pairwise: (0,1), (2,3), etc. This processes the iterator in pairs.

for x, y in zip(it, it):

comps += 1

lo, hi = (x, y) if x < y else (y, x)

comps += 1

if lo < mn:

mn = lo

comps += 1

if hi > mx:

mx = hi

for leftover in it:

comps += 1

if leftover < mn:

mn = leftover

comps += 1

if leftover > mx:

mx = leftover

return (mn, mx, comps)

if __name__ == "__main__":

data = [3, 17, 9, 25, 4, -2, 99, 7, 7, 42]

mn, mx, comps = min_max_pairwise(data)

print(f"min={mn} max={mx} comparisons={comps} (n={len(data)})")

Go version with the same guarantee

// minmax_pairwise.go

// Build: go build -o minmax_go ./minmax_pairwise.go

// Run: ./minmax_go

package main

import (

"fmt"

"log"

)

func MinMaxPairwise(xs []int) (min, max, comps int, _ error) {

n := len(xs)

if n == 0 {

return 0, 0, 0, fmt.Errorf("empty input")

}

if n == 1 {

return xs[0], xs[0], 0, nil

}

comps++

if xs[0] < xs[1] {

min, max = xs[0], xs[1]

} else {

min, max = xs[1], xs[0]

}

for i := 2; i+1 < n; i += 2 {

comps++

var lo, hi int

if xs[i] < xs[i+1] {

lo, hi = xs[i], xs[i+1]

} else {

lo, hi = xs[i+1], xs[i]

}

comps++

if lo < min {

min = lo

}

comps++

if hi > max {

max = hi

}

}

if n%2 == 1 {

last := xs[n-1]

comps++

if last < min {

min = last

}

comps++

if last > max {

max = last

}

}

return min, max, comps, nil

}

func main() {

data := []int{3, 17, 9, 25, 4, -2, 99, 7, 7, 42}

min, max, comps, err := MinMaxPairwise(data)

if err != nil {

log.Fatal(err)

}

fmt.Printf("min=%d max=%d comparisons=%d (n=%d)\n", min, max, comps, len(data))

}

What I like about this: the counting forces honesty. If you change the structure and the count goes up, you know exactly where you paid for it.

Note on comparison counting: The Go implementation correctly matches the Python version. For n=10 (even), we get 3n/2 − 2 = 13 comparisons. For n=9 (odd), we get 3(n−1)/2 = 12 comparisons. The initial comps++ before the first comparison correctly counts the initial pair comparison.

⚠️ Common Pitfalls (and How to Avoid Them)

- Off-by-one errors: Starting at index 0 vs 1. Always test with

[single_element]. - Empty input crashes: Never assume non-empty. Check first, process second.

- Ambiguous sentinel values: Using

INT_MIN(or any sentinel) to indicate "not found" or "empty array" is problematic because the sentinel value could be a valid data value. For production C code, use proper error handling:- Option 1: Return a struct with a success flag:

typedef struct { bool success; int value; } MaxResult; - Option 2: Use an out parameter:

bool find_max(int* arr, size_t n, int* out_max); - Option 3: If values are guaranteed non-negative, use

-1as sentinel (document this limitation clearly)

- Option 1: Return a struct with a success flag:

- Floating-point NaNs:

NaN > 5is alwaysfalse. Sanitize floats or document rejection. - Integer overflow:

INT_MAX + 1wraps. UselongorBigIntfor large datasets. - Premature optimization:

max()is fine for small lists. Profile before optimizing.

Practical safeguards that prevent problems down the road

Preconditions are not optional. Decide what “empty input” means and enforce it the same way everywhere. Throw, log-and-return, or use an option type. Just be consistent.

Numbers are not all equal. If you let floats through, NaN breaks comparisons. Either sanitize on input or document that your function rejects NaN.

State your invariant in code comments. Future you will forget. Reviewers will thank you.

Test the boring stuff. All equal, sorted ascending, sorted descending, negatives, huge values, random fuzz. Property tests make this fun instead of fragile 4.

Try It Yourself

- Modify the algorithm to return the index of the maximum, not the value.

- Handle ties: What if multiple elements equal the maximum? Return all indices.

- Generic version: Make it work for strings, dates, or custom objects with a comparator.

- Parallel version: Split the array and find max in each chunk, then combine (watch out for thread safety).

- Streaming version: Process values one at a time without storing the entire array.

Where to Go Next

- Bubble Sort: The simplest sorting algorithm—great for understanding the basics

- Insertion Sort: Builds order incrementally, perfect for nearly-sorted data

- Selection Sort: Selects the minimum each pass, useful when swaps are expensive

- Sorting algorithms: Quick Sort and Merge Sort, and when to use each

- Search algorithms: Binary search, hash tables, and their complexity trade-offs

- Graph algorithms: BFS, DFS, and pathfinding

- Dynamic programming: Memoization and optimal substructure

- Algorithm design patterns: Divide-and-conquer, greedy, backtracking

Recommended reading:

- Introduction to Algorithms (CLRS) for deep theory

- Algorithm Visualizations for interactive learning

- Practice problems on LeetCode or HackerRank

Wrapping Up

Algorithms aren't abstract math—they're the foundation of every program you write. The difference between O(n) and O(n²) can be the difference between a responsive app and a frozen browser tab. The difference between checking for empty input and assuming it's always there can be the difference between a production bug and a graceful error message.

Start simple. Write the obvious version first. Test it. Then, if profiling shows it's a bottleneck, optimize. But always understand why you're optimizing, and always measure the result.

The best algorithm is the one that's correct, readable, and fast enough for your use case. Sometimes that's max(). Sometimes it's a hand-rolled loop. Sometimes it's a pairwise comparison. The skill is knowing which one to reach for.

Key Takeaways

- Algorithms are deterministic: Same inputs → same outputs, every time

- Big-O matters at scale: O(n²) vs O(n log n) can mean hours vs seconds with large datasets

- Language choice affects clarity: Some languages make invariants obvious; others hide them

- Test edge cases: Empty inputs, single elements, all equal values, negatives

- Count comparisons honestly: The pairwise min/max approach saves real work

References

[1] NIST CSRC Glossary — "Algorithm." National Institute of Standards and Technology

[2] IEEE Std 610.12-1990 — Standard Glossary of Software Engineering Terminology. IEEE Computer Society, 1990

[3] NIST CSRC Glossary — "Deterministic Algorithm." National Institute of Standards and Technology

[4] Cormen, Leiserson, Rivest, Stein. Introduction to Algorithms, 4th ed., MIT Press, 2022

[5] Sedgewick, Wayne. Algorithms, 4th ed

[6] Wikipedia — "Time complexity." Overview of asymptotics and growth classes

[7] Blum, Floyd, Pratt, Rivest, Tarjan (BFPRT). "Time Bounds for Selection." Journal of Computer and System Sciences 7(4):448–461, 1973

[8] Harvard CS125 — Lecture 4: Maximum/Minimum and Median (2015/2016 PDFs)

[9] Temple University — Selection and Adversary Arguments. Lecture supplement (Spring 2022)

[10] OpenDSA — Adversarial Lower Bounds Proofs. Virginia Tech OpenDSA eText

[11] Knuth, Donald E. The Art of Computer Programming, Vol. 1: Fundamental Algorithms, 3rd ed., Addison-Wesley, 1997

About Joshua Morris

Joshua is a software engineer focused on building practical systems and explaining complex ideas clearly.