Priority Queues: Choosing What Matters Next

Priority queues are data structures that surface the most important item first. Learn how heaps power priority queues, where they appear in real systems, and how to implement them efficiently.

When Order Isn’t Fair

Raising three kids teaches you very quickly that “first come, first served” is not a workable strategy. Needs do not arrive one at a time, and they certainly do not arrive with equal urgency. One child is hungry. Another is frustrated. A third needs help now, even though they asked last. If you try to handle these situations strictly in arrival order, something important gets missed.

Over time, my kids learned that waiting your turn is not the same thing as getting what you need. We talk instead about importance. A scraped knee outranks a question about a game. A safety issue outranks a preference. A time-sensitive problem outranks something that can wait five minutes. Without ever naming it, they learned a core systems idea: when resources are limited, the most important task should be handled next.

That lesson shows up constantly in computing for the same reason it shows up at home. Work arrives continuously. Some tasks are urgent, some are trivial, and some can safely wait. A system that tries to treat everything equally will eventually fail. A system that can consistently decide what matters most right now stays responsive.

Sorting answers a simple question: what does the final order look like? Priority queues answer a harder one: what matters most right now?

In real systems, data arrives continuously. Some tasks are urgent, some are trivial, and some can wait. A priority queue exists for this reality. It does not care about a perfect final ordering. It cares about surfacing the most important item efficiently, over and over, as priorities shift.

This is the turning point in the algorithms series. We stop treating order as a destination and start treating it as a decision.

What Is a Priority Queue?

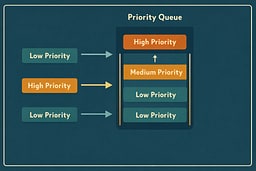

A priority queue is an abstract data structure designed around a single responsibility: repeatedly identifying the most important element in a changing set. Each element is stored alongside a priority value, and removal always returns the element with the highest priority, or the lowest, depending on the chosen convention.1 2 Unlike traditional queues, arrival order is not preserved unless it aligns with priority.

This distinction matters because priority queues are not concerned with history. They do not attempt to remember who arrived first or how long something has been waiting. Instead, they operate entirely in the present, answering one question over and over again: what deserves attention next? That framing makes priority queues fundamentally different from data structures that exist to preserve or represent complete order.

Every priority queue implementation supports three core operations. Elements must be insertable at any time. The most important element must be observable without removal. And the most important element must be removable efficiently. Everything else, including how priorities are stored or compared, is implementation detail. The abstraction is intentionally narrow because systems that rely on priority queues need reliability more than flexibility.

Why Heaps Power Priority Queues

Priority queues are most commonly implemented using binary heaps because heaps provide exactly the guarantees the abstraction requires, and no more. A binary heap enforces a single invariant across its structure: every parent node dominates its children according to the chosen ordering rule.1 3 That invariant ensures the most important element is always located at the root.

In a max heap, parents are greater than or equal to their children, making the maximum element immediately accessible. In a min heap, the relationship is reversed. Crucially, this guarantee holds without fully sorting the structure. Only the relationships required to keep the root correct are maintained, which allows insertions and removals to be performed in O(log n) time while peeking remains O(1).1

Heaps strike a deliberate balance between structure and flexibility. They impose enough order to make priority visible, but not so much that the system wastes time maintaining relationships that will never be queried. This is why heaps appear repeatedly throughout this series: they are not clever tricks, but disciplined compromises that show up wherever responsiveness matters more than completeness.

Priority Queues vs Sorting

It is tempting to think of a priority queue as a partially sorted list. That intuition is understandable, but it is wrong in an important way. Sorting algorithms assume that all relevant data is available before processing begins and that the final arrangement of every element matters. Priority queues assume neither.

In systems that rely on priority queues, data arrives continuously and unpredictably. New elements can appear at any time, and the system may never need to know the complete ordering of everything it has seen. Maintaining a fully sorted structure in this environment is not just inefficient, it is wasteful. Work is performed to preserve relationships that will never influence a decision.

Priority queues make a different tradeoff. They invest a small amount of work on every insertion and removal to ensure that the next decision is always cheap. By refusing to care about final order, they remain fast, responsive, and adaptable. This shift—from preserving order to enabling decisions—is what makes priority queues foundational in real systems rather than academic exercises.

Core Operations (Conceptually)

At the conceptual level, priority queues are intentionally simple. Their power comes not from complexity, but from discipline. Each operation exists to preserve the heap invariant while doing as little work as possible.

insert(item, priority):

add item to heap

restore heap property

peek():

return root element

extract():

remove root element

move last element to root

restore heap property

Insertion adds a new element and restores the heap property so that priority remains visible. Peeking returns the root element without modifying the structure, allowing systems to inspect urgency without committing to action. Extraction removes the root, replaces it with the last element, and re-heapifies to restore order. No operation attempts to do more than is required to keep the next decision correct.

This restraint is what makes priority queues predictable. When performance degrades, it does so logarithmically and transparently. There are no hidden linear scans or deferred reorganizations. Every cost is paid explicitly, at the moment it matters.

Implementations in Practice

Python

import heapq

pq = []

heapq.heappush(pq, (1, "low"))

heapq.heappush(pq, (0, "high"))

heapq.heappush(pq, (2, "background"))

priority, task = heapq.heappop(pq)

Python's heapq implements a min heap. Priority inversion is handled by convention.4

JavaScript (Node.js)

class PriorityQueue {

constructor() {

this.heap = [];

}

enqueue(value, priority) {

this.heap.push({ value, priority });

this.heap.sort((a, b) => a.priority - b.priority);

}

dequeue() {

return this.heap.shift();

}

}

This naive approach works for small data sizes but quickly becomes inefficient, which is why real systems reach for heaps.

Go

type Item struct {

value string

priority int

}

type PriorityQueue []*Item

func (pq PriorityQueue) Less(i, j int) bool {

return pq[i].priority < pq[j].priority

}

Go's container/heap package formalizes heap behavior and keeps the abstraction honest.5

C++

#include <queue>

#include <vector>

std::priority_queue<int, std::vector<int>, std::greater<int>> pq;

pq.push(3);

pq.push(1);

pq.push(2);

C++ treats priority queues as a first-class citizen in the standard library.6

Benchmarks

Performance Benchmarks (1,000 elements, 10 iterations)

WARNING: Important: These benchmarks are illustrative only and were run on a specific system. Results will vary significantly based on:

- Hardware (CPU architecture, clock speed, cache size)

- Compiler/runtime versions and optimization flags

- System load and background processes

- Operating system and kernel version

- Data characteristics (random vs. sorted vs. adversarial)

Do not use these numbers for production decisions. Always benchmark on your target hardware with your actual data. The relative performance relationships generally hold, but the absolute numbers are system-specific.

| Language | Time (ms) | Relative to C | Notes |

|---|---|---|---|

| C (O2) | 0.083 | 1.00x | Custom binary heap, Apple clang 14 |

| C++ (O2) | 0.061 | 0.74x | std::priority_queue |

| Go | 0.300 | 3.61x | container/heap (go1.25.3) |

| Rust (O) | 0.030 | 0.36x | BinaryHeap |

| Java 17 | 1.658 | 19.98x | PriorityQueue, JVM warm-up limited |

| JavaScript (V8) | 3.571 | 43.02x | Node.js 22.18.0, custom heap |

| TypeScript | 3.219 | 38.78x | tsc 5.9.3, same heap in Node |

| Python | 1.485 | 17.89x | heapq (CPython 3.9.6) |

| Ruby | 3.883 | 46.78x | Custom binary heap |

Benchmark details:

- Algorithm: Min-heap priority queue. Each iteration inserts 1,000 items, then extracts all 1,000 items.

- Test data: Deterministic LCG-seeded values (same dataset across languages), 1,000 elements, 10 iterations.

- Benchmark environment: macOS 12.7.6; Apple clang 14.0.0; Rust 1.91.0; Go 1.25.3; OpenJDK 17.0.17; Node 22.18.0; TypeScript 5.9.3; Python 3.9.6; Ruby 2.6.10.

- Environment notes: For hardware specs and benchmarking caveats, see our Algorithm Resources guide.

The pattern mirrors our Heap Sort benchmarks: compiled languages dominate, and dynamic runtimes pay for allocation, boxing, and dispatch overhead. The fact that Rust edges C here is not magic - the standard library heap is highly optimized and the input size is small enough that inlining and bounds checks matter more than algorithmic differences.

Where Priority Queues Appear in Real Systems

Priority queues appear wherever systems must make repeated decisions under pressure. Operating system schedulers rely on them to allocate CPU time among competing processes. Network stacks use them to ensure time-sensitive packets are transmitted before bulk data. Event loops and job queues depend on them to keep applications responsive even as background work accumulates.

Graph algorithms such as Dijkstra’s shortest path make their reliance on priority queues explicit.7 Each step selects the next most promising vertex based on accumulated cost, not arrival order. In every case, the pattern is the same: priority queues allow systems to respond intelligently without waiting for perfect information.

Limitations and Tradeoffs

- Priority queues are not stable by default; equal priorities may reorder.

- They do not support efficient arbitrary removal unless you add indexing or handles.

- They optimize one question only: what should happen next?

These are constraints, not accidents. A priority queue is honest about what it does well.

Priority in Practice: Watching a Queue Make Decisions

Sorting assumes the world waits. Priority queues assume it does not

They model computation the way life actually works: information arrives late, urgency is uneven, and choices must be made before everything is known. In that environment, perfect order is not just unnecessary—it is a distraction. What matters is having a reliable way to decide what deserves attention next, even as new demands continue to arrive.

Once you understand priority queues, heaps stop being a clever implementation detail and start looking like a discipline. They enforce just enough structure to keep systems responsive without wasting effort on order that will never be used. Whether you are managing tasks in an operating system, routing packets across a network, or teaching three kids how to share your attention, the same lesson applies.

Order is no longer something you finish. It is something you choose, again and again, under real constraints.

References

[1] Cormen, Leiserson, Rivest, Stein. Introduction to Algorithms, 4th ed., MIT Press, 2022

[2] Wikipedia - "Priority queue." Definition, operations, and implementations

[3] Wikipedia - "Binary heap." Heap invariant and complexity overview

[4] Python Docs - heapq module

[5] Go Docs - container/heap package

[6] cppreference - std::priority_queue

[7] Wikipedia - "Dijkstra's algorithm" (priority queue use case)

About Joshua Morris

Joshua is a software engineer focused on building practical systems and explaining complex ideas clearly.